The blank page seems bigger with LLMs when you invite them into your file explorer. How do you go from zero to one, starting with something interesting and ending up with an app? Most people start the same way they use Claude or ChatGPT in the browser: by asking questions.

That works up to a point. Once you want to build a more complicated application or process, you run into context rot: after a while the conversation outgrows what the LLM can hold in context, and you're back at square one, explaining to a forgetful intern what you were working on.

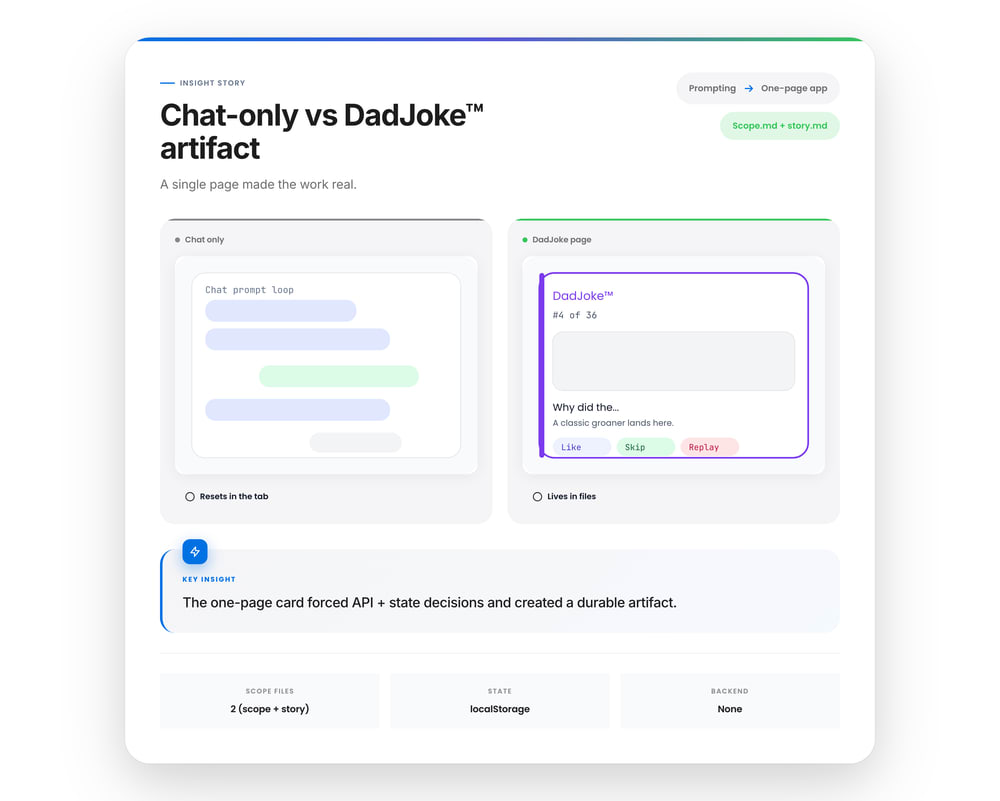

When you try to move from “could this work” to “does this exist”, this aspect of chat becomes a liability. Every answer evaporates when the tab closes, except for the plan files that get created when you run Claude or Codex in plan mode.

Getting value out of these tools requires a simple but non-obvious shift. Instead of asking questions in a web chat, embed the chat experience inside your workspace.

The easiest way to do that is to begin with a one-page website.

Chat (alone) is the wrong default for builders

Chat is great for planning, and not as good for progress. Chat interfaces reward clever prompts, long explanations, and theoretical completeness. That’s useful for learning, but it doesn’t help you build. There’s no persistent artifact, no shared ground truth, and no natural pressure to refactor or decide.

You build consistency by creating a system that supports building. Start with a plan and then create:

- files
- structure
- constraints
- tradeoffs you can point to later

When you move “chat with an LLM” inside a file-based IDE and give it concrete artifacts to operate on, the model behaves differently than it does in the web interface. You go through planning, evaluation, and thinking … and then you build.

The one-page website as a universal starting block

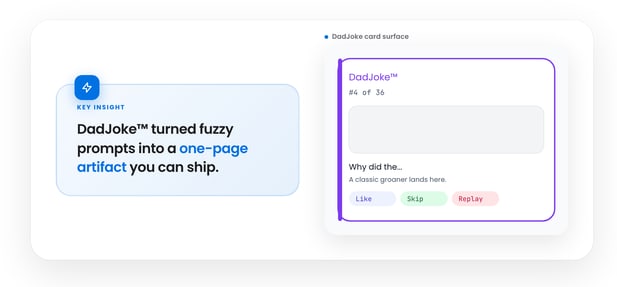

If you strip away tooling debates and framework preferences, most early projects fail for a simpler reason: they never become real enough to push back. A one-page website solves that immediately.

It forces an idea to cross a boundary — from imagined to instantiated — without demanding commitment to a backend, a framework, or a long-term architecture. You get something that runs, renders, and responds, while remaining cheap to abandon or reshape.

A single page collapses several early decisions into one artifact:

- what the product is, in plain language
- what a user can do, even if it’s trivial
- what feedback looks like, visually and interactively

From an LLM’s perspective, this is an ideal unit of work. The page is small enough to fit in context, concrete enough to reason about holistically, and visual enough that mistakes are obvious.

You’re no longer asking the model to design something abstract. You’re asking it to change a thing that already exists.

Start small by building a single card

Most “getting started” demos accidentally teach the wrong lesson.

They start with serious-looking interfaces full of charts, tiles, toggles, and settings. The result looks like software, but it doesn’t feel like a single artifact you can review.

A better metaphor is a collectible card. One joke. One card. One unit of work.

A deliberately dumb idea like a joke card gives you a solid constraint and a tight visual story. It also matches how people actually adopt tools: they want a small win that feels finished, not a scaffolding project that “will be great later.”

The card format forces the parts that matter:

- a title
- a clear body
- an image window (even if it’s a placeholder)
- a brand tag
- a number (“#4 of 36”)
- a few simple signals

That’s enough to feel like a product.
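To make the card format concrete, here is one way to model it as data. This is a minimal sketch under assumptions: the type and field names (`JokeCard`, `imageUrl`, `signals`, `formatCardNumber`) are hypothetical and not taken from any library.

```typescript
// Hypothetical data model for one collectible card.
// Field names are illustrative assumptions, not a fixed schema.
interface JokeCard {
  title: string;
  body: string;
  imageUrl: string | null; // null means "show a placeholder image window"
  brand: string; // brand tag, e.g. "DadJoke"
  number: number; // this card's position in the set
  total: number; // size of the set
  signals: string[]; // a few simple signals to display on the card
}

// Format the "#4 of 36" label shown on the card.
function formatCardNumber(card: Pick<JokeCard, "number" | "total">): string {
  return `#${card.number} of ${card.total}`;
}

const example: JokeCard = {
  title: "Dad Joke",
  body: "I'm reading a book about anti-gravity. It's impossible to put down.",
  imageUrl: null,
  brand: "DadJoke",
  number: 4,
  total: 36,
  signals: [],
};

console.log(formatCardNumber(example)); // prints "#4 of 36"
```

Writing the shape down this early gives the LLM something to check its changes against. A missing field shows up as a type error, not a vague "the card looks off."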

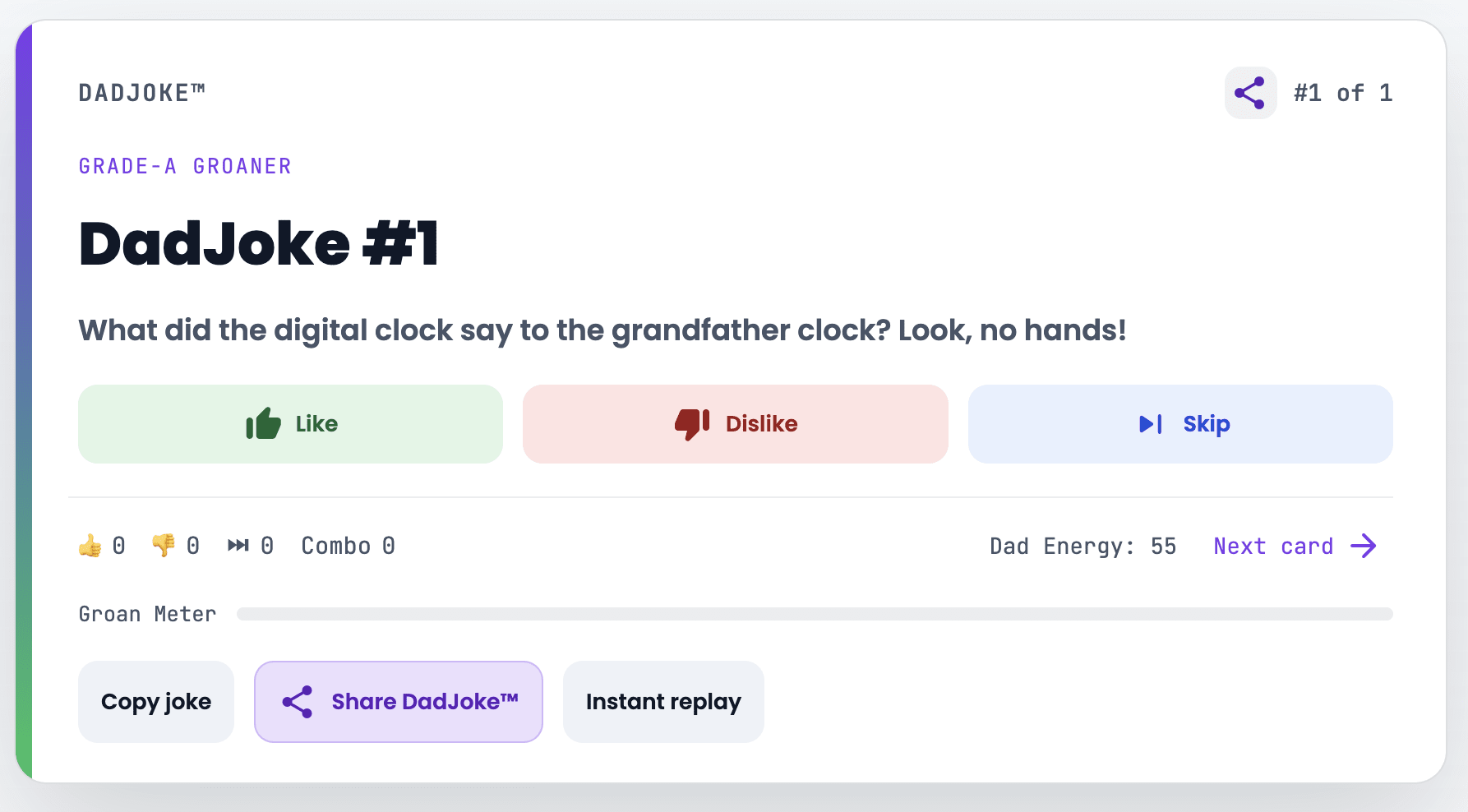

The dad joke card (API-only, no backend)

Let’s try this out with a smallish project: a one-page dad joke card. Not just because jokes are fun, but because the shape of the problem matches what we want to solve.

The page does almost nothing:

- fetches a random dad joke from an external API
- displays it on a collectible-style card
- shows a card number (“#4 of 36”) and a brand tag (“DadJoke”)
- lets the user choose:
  - 👍 like
  - 👎 dislike
  - ⏭ skip
- shows simple stats (liked / disliked / skipped)
- has a “next card” action

There’s no backend. No database. No server. Just a single page making an API call.
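The article doesn't name the API. As an assumption, this sketch uses icanhazdadjoke.com, a public dad joke API that returns JSON like `{ "id": "...", "joke": "...", "status": 200 }` when the request sends an `Accept: application/json` header. The `FetchLike` type and `fetchRandomJoke` function are hypothetical names. The fetch function is passed in, so the logic can be exercised with a stub and no network.

```typescript
// Assumption: icanhazdadjoke.com is the external API; swap in your own.
const JOKE_API_URL = "https://icanhazdadjoke.com/";

// Minimal slice of fetch that this sketch relies on, so a stub can be
// injected in tests. In the browser, the global `fetch` satisfies it.
type FetchLike = (
  url: string,
  init: { headers: Record<string, string> },
) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

interface Joke {
  id: string;
  text: string;
}

async function fetchRandomJoke(fetchFn: FetchLike): Promise<Joke> {
  const res = await fetchFn(JOKE_API_URL, {
    headers: { Accept: "application/json" }, // ask for JSON, not HTML
  });
  if (!res.ok) {
    throw new Error(`Joke API returned HTTP ${res.status}`);
  }
  const data = (await res.json()) as { id?: unknown; joke?: unknown } | null;
  // Validate the fields we use instead of trusting the payload blindly.
  if (!data || typeof data.id !== "string" || typeof data.joke !== "string") {
    throw new Error("Unexpected joke payload");
  }
  return { id: data.id, text: data.joke };
}
```

On the page, you would call `fetchRandomJoke(fetch)` when the card loads and again on "next card." The joke `id` is worth keeping because it gives you a stable key for ratings and duplicate checks.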

And yet, this already forces real product decisions:

- What does “skip” mean? Is it neutral or negative?
- What happens when the same joke appears twice?
- Do you treat “no response” as “unrated” instead of “dislike”?
- Where does state live (memory vs localStorage)?
- What is the smallest UI that still feels complete?

Those decisions live in the files, so when a chat session loses the thread, you have a clear way to recover.
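Here is one possible set of answers to those questions, written down as code. This is a sketch, and the names (`Rating`, `rate`, `computeStats`, `KeyValueStore`, the `"dadjoke-ratings"` key) are hypothetical. The assumptions it encodes:

- Skip is neutral and counted separately.
- Ratings are keyed by joke id, so a repeated joke overwrites its earlier rating.
- A joke with no entry is "unrated."
- State persists through an interface that matches the `getItem`/`setItem` methods of the Web Storage API.

```typescript
type Rating = "like" | "dislike" | "skip";

// Keyed by joke id: a joke seen twice keeps one rating (the latest),
// so duplicates never inflate the stats. No entry means "unrated".
type Ratings = Record<string, Rating>;

interface Stats {
  liked: number;
  disliked: number;
  skipped: number; // skip is tracked as neutral, separate from dislike
}

function rate(ratings: Ratings, jokeId: string, rating: Rating): Ratings {
  return { ...ratings, [jokeId]: rating }; // return a new object, no mutation
}

function computeStats(ratings: Ratings): Stats {
  const stats: Stats = { liked: 0, disliked: 0, skipped: 0 };
  for (const id of Object.keys(ratings)) {
    const r = ratings[id];
    if (r === "like") stats.liked++;
    else if (r === "dislike") stats.disliked++;
    else stats.skipped++;
  }
  return stats;
}

// Subset of the Web Storage API, so `localStorage` can be passed in the
// browser and an in-memory stub anywhere else.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
}

const STORAGE_KEY = "dadjoke-ratings"; // hypothetical key name

function saveRatings(store: KeyValueStore, ratings: Ratings): void {
  store.setItem(STORAGE_KEY, JSON.stringify(ratings));
}

function loadRatings(store: KeyValueStore): Ratings {
  const raw = store.getItem(STORAGE_KEY);
  if (raw === null) return {}; // first visit: everything is unrated
  try {
    const parsed: unknown = JSON.parse(raw);
    return typeof parsed === "object" && parsed !== null ? (parsed as Ratings) : {};
  } catch {
    return {}; // corrupted storage: start fresh rather than crash
  }
}
```

To keep a repeat joke off the screen, you could check `id in ratings` before showing a card and fetch again. Whichever answers you pick, recording them in scope.md alongside code like this keeps the chat honest.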

Scope and story live outside the prompt

Here’s how to start. Before writing any code, create two small markdown files:

- scope.md: what this is and, more importantly, what it isn’t
- story.md: the user flow and what “done” looks like

When you ask the LLM to help you build, it’s not guessing your goals from a prompt. It’s extending a shared plan. The conversation becomes subordinate to the artifacts, which is exactly what you want.

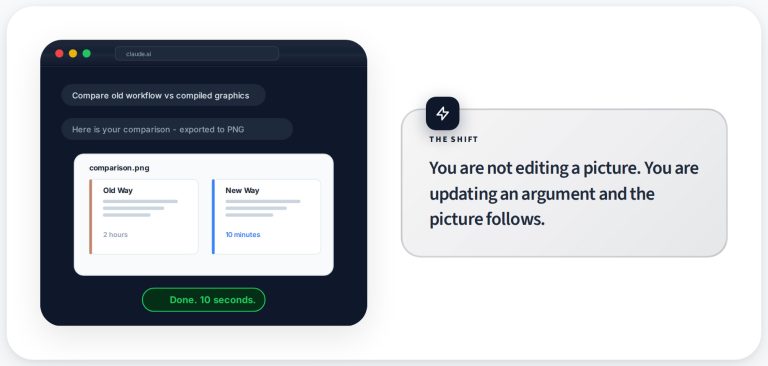

Let constraints teach you architecture

As the page evolves, something predictable happens: the JavaScript starts to sprawl.

State handling, API calls, UI updates, edge cases—they all pile into one place. Somewhere around a few hundred lines, working on it stops being fun.

You don’t refactor because “best practices” say so. You refactor because the artifact demands it. Splitting logic into smaller files stops being academic advice and becomes relief.

This is how architecture should be learned: as a response to pressure, not as a prerequisite.

SPOILER ALERT: the LLM thrives here. With smaller, clearer files, it can reason locally.
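As an illustration of the kind of split that relieves the pressure, here is one piece of it: rendering pulled out into a pure function that takes data and returns markup, with no fetching or storage inside. The file layout in the comments and the names `CardView`, `escapeHtml`, and `renderCard` are hypothetical. This is a sketch of one reasonable split, not the only one.

```typescript
// One piece of a possible split (file names are hypothetical):
//   api.ts    -> fetching the joke
//   state.ts  -> ratings and stats
//   render.ts -> this function: data in, markup out, no side effects
interface CardView {
  joke: string;
  brand: string;
  number: number;
  total: number;
  liked: number;
  disliked: number;
  skipped: number;
}

// Escape text before putting it into HTML; the joke comes from an
// external API and should not be trusted as markup.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&#39;");
}

function renderCard(view: CardView): string {
  return [
    `<article class="card">`,
    `  <header>${escapeHtml(view.brand)} #${view.number} of ${view.total}</header>`,
    `  <div class="image-window"></div>`, // placeholder image window
    `  <p class="joke">${escapeHtml(view.joke)}</p>`,
    `  <footer>👍 ${view.liked} / 👎 ${view.disliked} / ⏭ ${view.skipped}</footer>`,
    `</article>`,
  ].join("\n");
}
```

In the browser, you would assign the result to a container's `innerHTML`. Because the function only maps data to markup, you or the LLM can change the card's layout by reading this one file, without touching fetching or state.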

Why stopping early is the point

This example intentionally stops at a one-page site plus an external API. That’s not because a backend, a database, or a server doesn’t matter. It’s because you don’t need them to learn how to build effectively with AI.

By the time this page works, you’ve already practiced the transferable skill:

- scoping work explicitly
- anchoring context in files
- iterating without resetting progress
- refactoring when complexity appears

The dad joke card isn’t a toy. It’s a complete learning loop with a clean exit.

If you’re getting started with AI coding tools and feeling stuck, the problem is rarely the model. It’s the environment you’ve put it in.

Chat is great for answers. Builders need artifacts. And DadJokes are fun!

What’s the takeaway? Start with something that runs. Keep it small enough to understand. Let constraints force decisions. And treat the model as a collaborator inside your workspace, not a voice in a tab.