
When I was a kid, I wanted laser eyes.

Not metaphorical laser eyes. Actual comic-book-origin-story laser eyes. I read too much X-Men, saw Scott Summers vaporize a problem, and thought: yes, that seems useful.

That same feeling is the closest description I have for what using AI well feels like right now. You can summarize a market in minutes. You can turn a rough product idea into a working prototype by lunch. You can ask a model questions that used to require interrupting an engineer, a designer, or a data person.

The power is real. The confusion is real too.

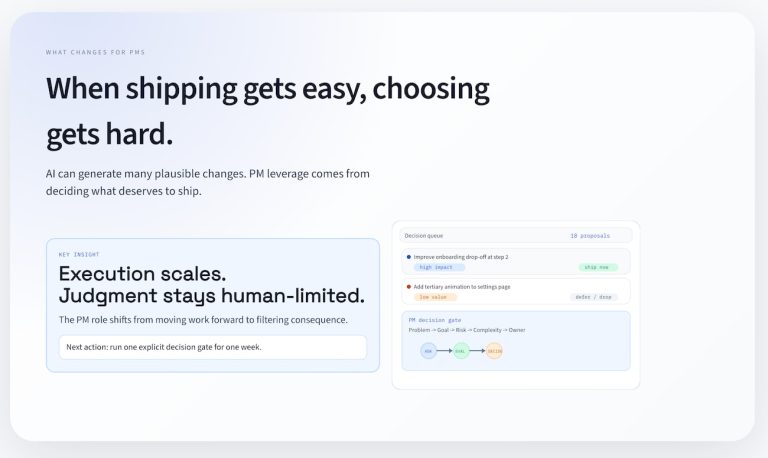

The mistake is thinking the new superpower is execution. It is not. The real shift is that execution got cheap enough that judgment became the bottleneck.

The bottleneck moved

Three years ago, I was building API calls into spreadsheets and thought I was discovering fire.

If I had an idea in 2022, one of the first questions was usually: who do I need to ask to make this real?

Now, if I have an idea that needs a scratch implementation, I can ask Claude, Cursor, or another coding agent to produce the first pass. I can get a UI, some glue code, a rough data model, and basic tests without opening the old queue of favors and dependencies.

That sounds like pure upside, and for a while, it feels like it. Then you live with it for a few weeks and realize you can generate more surface area than you can evaluate.

More screens. More branches. More speculative workflows. More “pretty close” solutions that still hide bad assumptions.

AI did not remove the need for product thinking. It increased the penalty for weak product thinking.

When output is expensive, bad ideas die early. When output is cheap, bad ideas multiply.

Why “Just Build It” stops working

The easiest trap with AI is treating it like an autopilot instead of an exoskeleton.

- Autopilot thinking says: give the tool a goal, let it run, hope the result is good.
- Exoskeleton thinking says: use the tool to amplify your strength, but keep your hands on direction, balance, and proof.

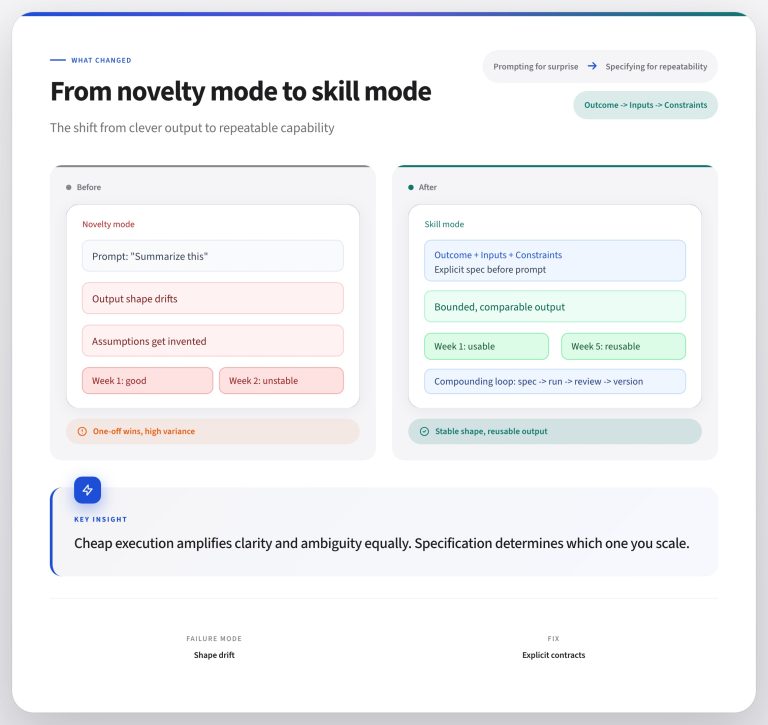

That distinction matters because AI is very good at extending motion. It is much less reliable at improving the quality of the plan.

If your framing is vague, the system will often produce a vague solution faster. If your acceptance criteria are weak, it will happily help you ship something that only looks complete.

The biggest shift for me this year has been realizing that the highest-leverage prompt in my workflow is not “build this.”

It is some variant of:

- ask me clarifying questions,
- then propose a plan,
- then define the checks that would prove we succeeded.

Those are the magic words.

That is the difference between getting motion and getting traction.
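As a minimal sketch, that three-part request can be kept in a small helper so it gets used every time instead of only when I remember it. The function name `build_kickoff_prompt` and the "Do not build yet." line are my own illustrative assumptions, not a required wording for any particular tool.

```python
def build_kickoff_prompt(idea: str) -> str:
    """Wrap a rough idea in the three requests: questions, plan, checks."""
    # The wording mirrors the three steps above; adjust it to your own voice.
    steps = [
        "Ask me clarifying questions.",
        "Then propose a plan.",
        "Then define the checks that would prove we succeeded.",
    ]
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    # "Do not build yet." is an assumed guard line, added for illustration.
    return f"Idea: {idea.strip()}\n\nDo not build yet.\n{numbered}"


if __name__ == "__main__":
    print(build_kickoff_prompt("Add CSV export to the onboarding flow"))
```

The point of the helper is not the string itself. It is that the request for questions, a plan, and checks arrives before the request to build.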

A concrete example in miniature

When I get a product idea, it’s usually something small enough to scratch quickly but important enough that I want to see it working. Maybe it’s a way to create graphics. Perhaps it’s a skill or a report that collapses three annoying steps into one.

Three years ago, the way I built something like this was to socialize the idea, wait for someone to have time, and hope it survived contact with the next sprint. I couldn’t build it myself: either I needed help from a developer, or I’d get stuck on some dumb syntax error before I made real progress.

Now I can sketch the flow in plain English in the morning and have a working branch by lunch. That part still feels a little absurd in the best way.

Here is the catch: if I stop there, I have a demo, not a decision.

I’m now starting to build features myself, and I recently made a small change entirely through vibe-coding. It looked right in the demo and in the tests I thought I was running. When it reached production, the feature didn’t work (syntax error, of course). It was an easy fix, but I had missed it because I didn’t realize my vibe-coded change had created a mock test rather than testing against actual data from the API. I didn’t catch the failure ahead of time because I hadn’t defined the success criteria as observable conditions.

A better loop looks like this:

1. Define the job to be done in one sentence.
2. Ask the model what assumptions are hidden in the request.
3. Set exit criteria before implementation starts.
4. Build the smallest version that can be tested.
5. Run checks against the criteria, not against vibes.
6. Decide whether to keep, revise, or kill the idea.

The AI accelerates steps 2 through 4. I still own steps 1, 5, and 6. Those are the parts that determine whether speed compounds or whether I just created more cleanup work for myself.
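To make "criteria, not vibes" concrete, here is a minimal sketch of the checking and deciding steps in the loop above. Everything in it is hypothetical: the `Criterion` class, the three example criteria, and the shape of the `observed` result are illustrative assumptions, not output from a real system. The third criterion is the one I was missing in the story above, a check that the result came from live data rather than a mock.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Criterion:
    description: str
    check: Callable[[dict], bool]  # takes an observed result, returns pass/fail


def evaluate(observed: dict, criteria: list[Criterion]) -> list[str]:
    """Return the descriptions of every criterion the observed result fails."""
    return [c.description for c in criteria if not c.check(observed)]


# Hypothetical exit criteria, written before implementation starts.
criteria = [
    Criterion("export returns HTTP 200", lambda r: r.get("status") == 200),
    Criterion("export contains at least one row", lambda r: len(r.get("rows", [])) > 0),
    Criterion("data came from the real API, not a mock", lambda r: r.get("source") == "live"),
]

# Hypothetical observation: fine in a demo, but produced by a mock.
observed = {"status": 200, "rows": [{"id": 1}], "source": "mock"}

failures = evaluate(observed, criteria)
decision = "keep" if not failures else "revise"
print(decision, failures)
```

The model can help write the check functions. Which conditions belong on the list, and what to do when one fails, stays with me.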

Work in gates

The biggest improvement in my own workflow has not been “better writing prompts” or “better code generation.”

It has been treating AI work like an incremental delivery system with gates.

Every project starts with a plan, then becomes a series of stories. I build an example of the criteria for a story, then ask the agent to break the requirements into story-shaped chunks. But that doesn’t mean we’re ready to build. I imagine I’m working with a person, and keep working on each story until it sounds like the feature I want to build. Then I use tests, review checklists, and concrete acceptance criteria to verify the output before I move on.

That is basically the CI thinking developers already practice, applied earlier in the process, to the product development work itself. You create, edit, nudge, and build. The agent is not doing all of the work. Ideally, it is doing the next bounded piece while you hold the thread. (And doing the work you hate the most, like fixing syntax.)

Instead of asking, “Can the model do the whole thing?” the better question is, “What proof do I need at each step before the next layer of complexity is allowed in?”
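One way to picture that question is a runner that refuses to move on until the current gate passes. This is a sketch under my own assumptions: `run_gates` and the three gate names are invented for illustration, and the lambdas stand in for whatever real check you use (a test run, a review checklist, a manual sign-off).

```python
from typing import Callable, Optional

Gate = tuple[str, Callable[[], bool]]  # (gate name, check that returns pass/fail)


def run_gates(gates: list[Gate]) -> tuple[list[str], Optional[str]]:
    """Run gates in order and stop at the first one that fails.

    Returns the names of the gates that passed and the name of the
    failing gate, or None if every gate passed.
    """
    passed: list[str] = []
    for name, check in gates:
        if not check():
            return passed, name
        passed.append(name)
    return passed, None


# Hypothetical gates for a single story; each lambda is a placeholder check.
gates = [
    ("story has acceptance criteria", lambda: True),
    ("tests run against real data", lambda: False),
    ("review checklist complete", lambda: True),
]

passed, blocked_at = run_gates(gates)
print(passed, blocked_at)
```

The third gate never runs here, which is the point: nothing downstream gets built on top of a step that has not been proven.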

The same structure applies outside code. For strategy memos, research synthesis, or roadmap work, the gate questions look like this:

- What claim is being made?
- What evidence supports it?
- What assumption would break it?
- What decision changes if this is true?
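The four questions can also be held as a simple record, so that an unanswered one is visible instead of implied. This is only a sketch: the `ClaimGate` class, its field names, and the example memo are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class ClaimGate:
    claim: str = ""                # What claim is being made?
    evidence: str = ""             # What evidence supports it?
    breaking_assumption: str = ""  # What assumption would break it?
    decision_if_true: str = ""     # What decision changes if this is true?


def unanswered(gate: ClaimGate) -> list[str]:
    """List the gate questions that still have no answer."""
    return [f.name for f in fields(gate) if not getattr(gate, f.name).strip()]


# Hypothetical memo: a confident claim with no evidence attached yet.
memo = ClaimGate(
    claim="Export in onboarding improves activation",
    evidence="",
    breaking_assumption="New users have data worth exporting in week one",
    decision_if_true="Prioritize export ahead of other onboarding work",
)

print(unanswered(memo))
```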

Without that structure, AI gives you momentum without reliability. With it, AI becomes a serious leverage tool.

The new PM skill

The practical skill shift for PMs is straightforward: less energy spent translating ideas into tickets, more energy spent designing evaluation loops.

The old advantage was knowing how to get scarce builders pointed at the right problem.

The new advantage is knowing how to turn abundant generation into trustworthy progress.

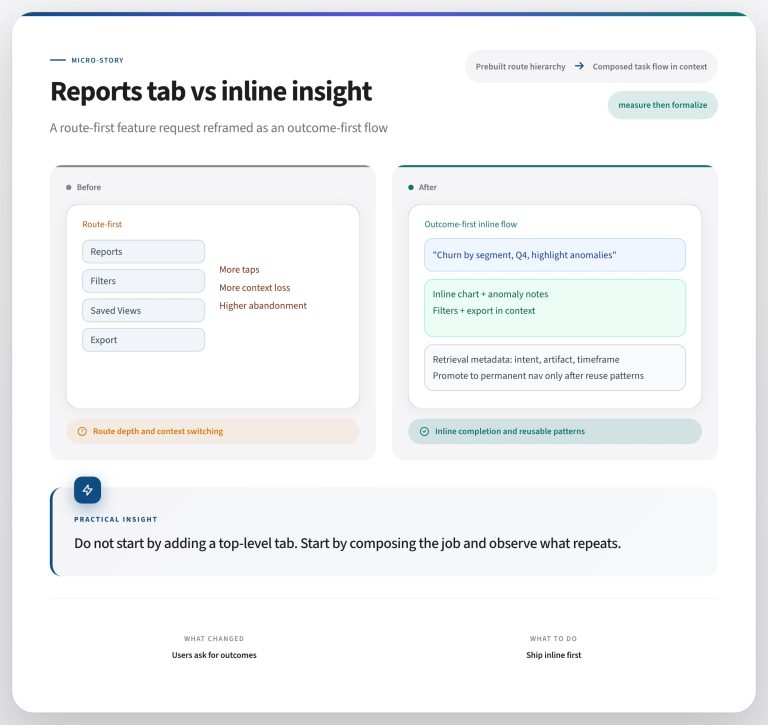

The difference shows up in how you frame the work. The old version: “Can we add export to the onboarding flow?” The new version: “What would need to be true for export in onboarding to improve activation, and how would we know within two weeks?”

That means asking sharper questions. It means writing better acceptance criteria. It means spotting where a prototype created false confidence. It means knowing when a polished answer is still strategically wrong.

In other words, the PM job becomes more editorial and more operational at the same time. You are not just deciding what to build. You are designing the system that decides whether what got built deserves to survive.

Use the suit, don’t worship it

AI does feel like a superpower. That part is not hype.

But superpowers are only useful if you can aim them.

The right metaphor is not replacement. It is the exoskeleton: it can help you lift more, move faster, and cover ground that used to be out of reach. But it does not tell you where to go, what is safe to carry, or whether the structure you are building is worth keeping.

That judgment is still your job.

If AI is giving your team more output than ever, the relevant question is no longer “How do we use it more?”

It is “What decision gates do we need so that faster output becomes better work?”

What’s the takeaway? Execution is no longer the bottleneck; your decisions are. Set your criteria before you build, check against them after, and own the decision about whether what got built deserves to survive.